(inverted rod) 1978 – 1983

The first balancing of an inverted rod by computer vision on an electric cart was achieved by [Meissner 1982], using observer techniques from systems dynamics; [Haas 1982] solved the feature-extraction part. The first publication in English was [Meissner and Dickmanns 1983]. In 1981, the custom-designed microprocessor system BVV_1 of the Institut fuer Messtechnik / UBM / LRT became the first system based on general-purpose microprocessors to perform a closed-loop dynamic real-time vision task. It used four Intel 8085 microprocessors to grab on-line-selected portions of video frames and one further processor for data processing after edge extraction. Spatio-temporal knowledge was represented by dynamic models capturing the essential dynamic aspects of the controlled system; the measurement model for vision was a pinhole camera with perspective projection.
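The pinhole measurement model can be sketched as follows. This is a minimal illustration, not the BVV_1 implementation: the camera frame (Z along the optical axis, Y pointing up), the hypothetical helper `rod_image_points`, and all numbers are assumptions made here for illustration.

```python
import math


def project_pinhole(X, Y, Z, f):
    """Perspective projection of a camera-frame point onto the image plane.

    Ideal pinhole camera with focal length f; Z is the depth along the
    optical axis and must be positive (point in front of the camera).
    """
    if Z <= 0:
        raise ValueError("point must lie in front of the camera (Z > 0)")
    return f * X / Z, f * Y / Z


def rod_image_points(cart_x, theta, rod_length, distance, f):
    """Hypothetical helper: image positions of the rod's foot point and tip.

    Assumed geometry (not from the source): the optical axis is perpendicular
    to the plane of motion at the given distance, camera X runs along the
    track, camera Y points up, and theta is the rod angle from the vertical.
    """
    foot = project_pinhole(cart_x, 0.0, distance, f)
    tip = project_pinhole(cart_x + rod_length * math.sin(theta),
                          rod_length * math.cos(theta), distance, f)
    return foot, tip


# Example: 1 m rod tilted by 0.05 rad, cart 0.1 m off-axis, camera 4 m away.
foot, tip = rod_image_points(0.1, 0.05, 1.0, 4.0, 0.025)
```

In this simplified geometry every point of the rod lies at the same depth, so the projection reduces to a scaling by f/distance; the division by Z matters as soon as observed points lie at different depths.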

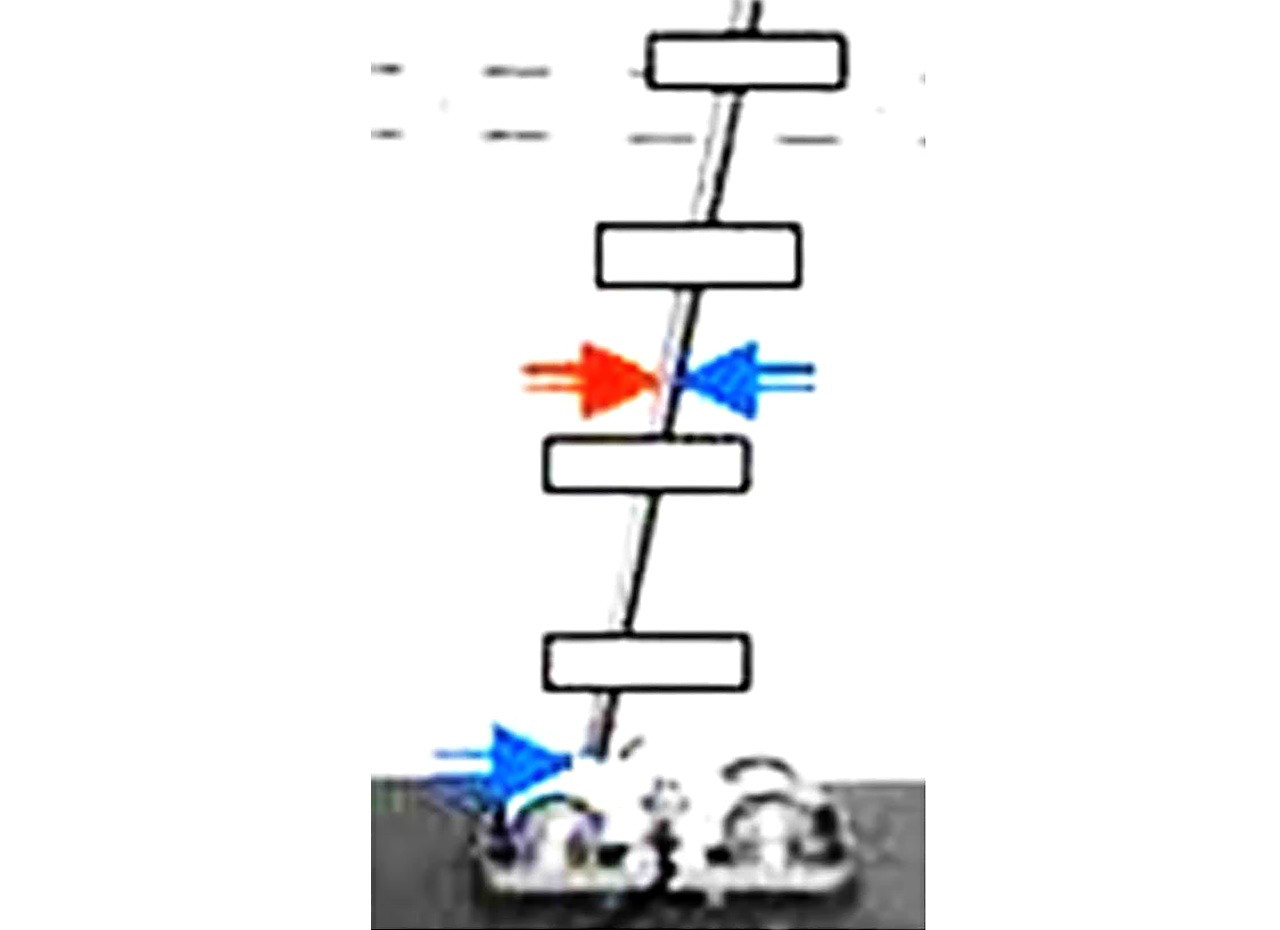

Prediction error feedback was the approach selected right from the beginning for perceiving

- the cart position (the joint between cart and pole) and

- the angular orientation of the rod (pole), which is balanced through a joint at its lower end.

Adding the (imagined) blue and red arrows at the center of gravity (cg) of the pole does not change the force balance, since they cancel pairwise. The force at the foot point of the pole can therefore be interpreted as producing a longitudinal acceleration of the cg of the rod (red arrow) and a torque about the cg given by the blue pair of arrows. Solving the corresponding differential equations leads to expectation-based tracking of edge elements of the rod (see the four windows marked in the figure) and to longitudinal control of the cart.
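The force decomposition above can be turned into a model equation for the rod. This is a minimal sketch, assuming a thin uniform rod of length L (moment of inertia m L^2/3 about the joint), a frictionless joint, and the cart acceleration a as input; the source does not state its exact equations. Taking moments about the joint, gravity at the cg contributes m g (L/2) sin(theta), the inertial reaction to the cart acceleration contributes -m a (L/2) cos(theta), and dividing by m L^2/3 gives theta'' = 3/(2L) * (g sin(theta) - a cos(theta)), with theta measured from the vertical. The function names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def rod_angular_acceleration(theta, cart_accel, rod_length):
    """Angular acceleration (rad/s^2) of a uniform rod hinged at its lower end.

    Assumes a thin uniform rod (inertia m*L^2/3 about the joint) and a
    frictionless joint. theta is the tilt from the vertical in rad, positive
    in the direction of positive cart_accel (horizontal joint acceleration
    in m/s^2).
    """
    return 1.5 / rod_length * (G * math.sin(theta) - cart_accel * math.cos(theta))


def simulate_rod(theta0, cart_accel, rod_length, dt=0.001, t_end=1.0):
    """Integrate the rod angle for a constant cart acceleration.

    Uses semi-implicit Euler steps, starting from rest at theta0; returns
    (theta, omega) at t_end.
    """
    theta, omega = theta0, 0.0
    for _ in range(int(round(t_end / dt))):
        omega += rod_angular_acceleration(theta, cart_accel, rod_length) * dt
        theta += omega * dt
    return theta, omega
```

For example, `simulate_rod(0.01, 0.0, 1.0, t_end=0.5)` lets a 1 m rod tilted by 0.01 rad fall without control, and the angle grows roughly 3.5-fold within half a second. Accelerating the joint in the direction of the tilt reduces theta'', which is why cart acceleration alone suffices as the control input.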

The Newtonian laws of translational and rotational motion represent spatio-temporal knowledge about the process to be observed and controlled. Exploiting these models from engineering and control applications greatly reduced the computing power needed for real-time computer vision. No previous images had to be stored and compared with the current one (the predominant approach at that time); the state of the system, including the speed components, was estimated by least-squares fits to the edge positions measured in the windows shown.
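The geometric part of such a fit can be sketched as a least-squares line through the edge positions found in the tracking windows. This is an illustrative assumption, not the original algorithm: the function name, the pixel coordinates, and the choice to regress image column on image row are hypothetical. Regressing column on row keeps the slope finite for a nearly vertical rod. A single fit yields only position and orientation; the speed components additionally require the dynamic model linking measurements over time.

```python
import math


def fit_rod_edge(rows, cols):
    """Least-squares fit of col = c0 + s * row to edge positions.

    Hypothetical illustration: rows are image-row coordinates of edge points
    found in the tracking windows, cols the corresponding columns. Returns
    (c0, s, angle), where angle = atan(s) is the edge's tilt from the
    vertical image axis in rad.
    """
    n = len(rows)
    if n < 2 or n != len(cols):
        raise ValueError("need at least two matching (row, col) pairs")
    mean_r = sum(rows) / n
    mean_c = sum(cols) / n
    s_rr = sum((r - mean_r) ** 2 for r in rows)
    if s_rr == 0:
        raise ValueError("rows must not all be equal")
    s_rc = sum((r - mean_r) * (c - mean_c) for r, c in zip(rows, cols))
    s = s_rc / s_rr              # slope minimizing the squared column residuals
    c0 = mean_c - s * mean_r     # intercept: line passes through the centroid
    return c0, s, math.atan(s)


# Edge points from four windows along a slightly tilted rod (made-up values).
c0, s, angle = fit_rod_edge([0, 10, 20, 30], [100, 101, 102, 103])
```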

Edge tracking was expectation-based, and the only control input was the longitudinal acceleration of the cart. Drawing on control engineering, observer techniques, well known in systems dynamics since the 1960s, were used to balance the pole on the cart by vision. The cart was modeled as an integrator plus a first-order system in one degree of freedom (dof): longitudinal speed and position had to be controlled through a single electric input set by the computer (a second-order system). The rotation angle of the rod, supported at its lower end on the cart by a single-degree-of-freedom joint (rotating in the vertical plane), was to be controlled through cart acceleration. To prevent the cart from drifting off at constant speed while the pole stays balanced, the pole position (= joint position = cg position in the equilibrium state) was also prescribed.
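Prediction error feedback for the cart alone can be sketched as a discrete-time Luenberger observer on the integrator-plus-first-order-lag model. Everything numerical here is assumed for illustration: an Euler discretization with step dt = 0.02 s, input gain K = 1, lag time constant T = 0.5 s, and hand-picked observer gains l1 = 0.5, l2 = 2.0, for which the error dynamics have eigenvalues of about 0.84 and 0.62. Only the position is measured; the speed is reconstructed from the prediction error. The function names are hypothetical.

```python
def cart_step(p, v, u, dt, K=1.0, T=0.5):
    """One Euler step of the assumed cart model: integrator plus first-order lag.

    p: position (m), v: speed (m/s), u: computer-set input. K (input gain)
    and T (lag time constant, s) are illustrative values, not from the source.
    """
    return p + dt * v, v + dt * (K * u - v) / T


def observer_step(p_hat, v_hat, u, y, dt, l1=0.5, l2=2.0):
    """Luenberger observer: predict with the model, correct by the prediction error.

    y is the measured position (e.g. from vision). The hand-picked gains
    l1, l2 give stable error dynamics for dt = 0.02 s and T = 0.5 s.
    """
    p_pred, v_pred = cart_step(p_hat, v_hat, u, dt)
    innovation = y - p_hat  # prediction error of the measured output
    return p_pred + l1 * innovation, v_pred + l2 * innovation


if __name__ == "__main__":
    dt = 0.02
    p, v = 0.0, 0.5          # true state, unknown to the observer
    p_hat, v_hat = 0.0, 0.0  # observer starts with a wrong speed
    for _ in range(300):
        u = 0.2
        p_hat, v_hat = observer_step(p_hat, v_hat, u, y=p, dt=dt)
        p, v = cart_step(p, v, u, dt)
    print(f"speed: true {v:.4f}, estimated {v_hat:.4f}")
```

The estimation error obeys e[k+1] = (A - L C) e[k], so choosing l1 and l2 amounts to placing the eigenvalues of that matrix inside the unit circle.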

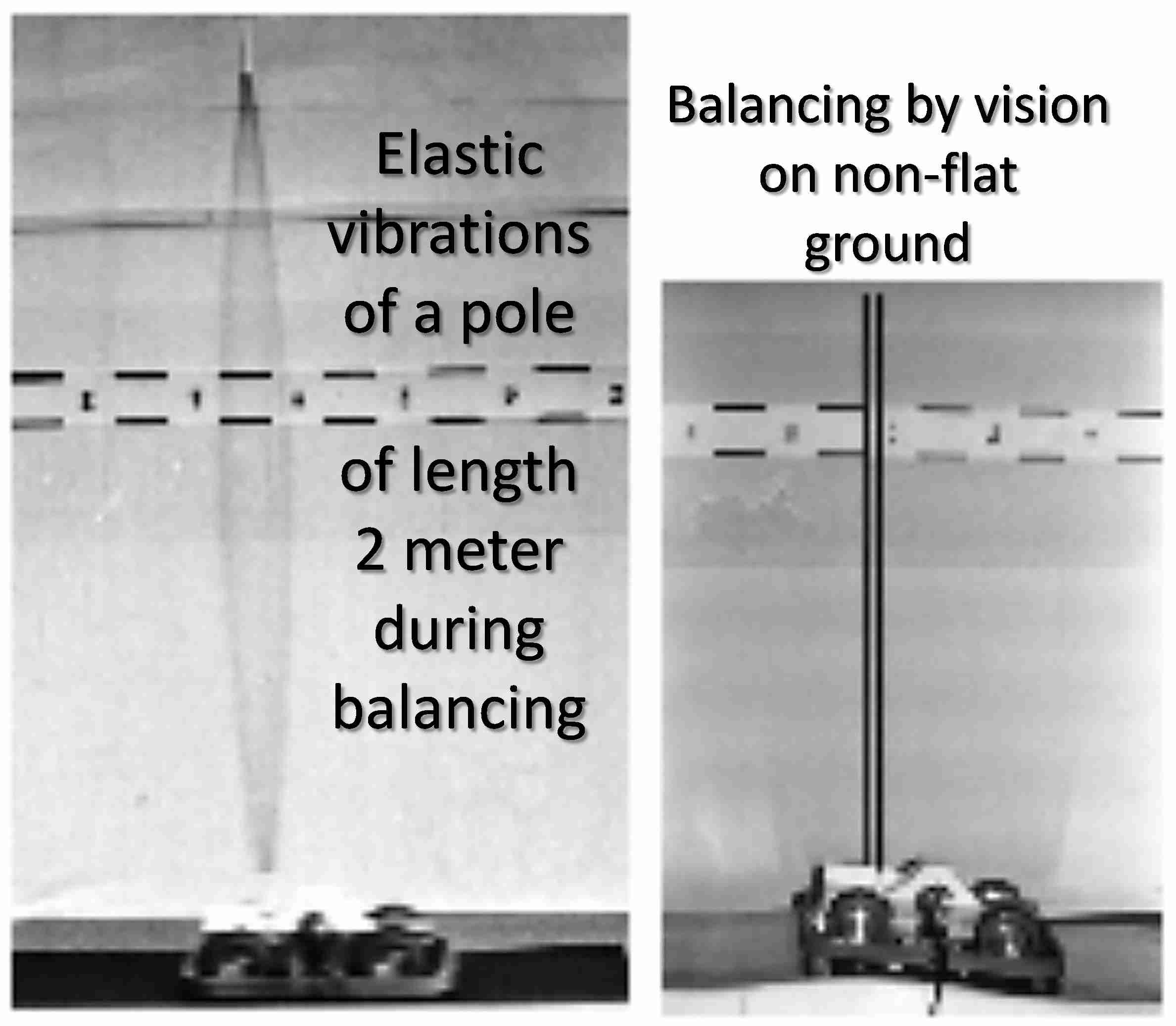

This example immediately shows the advantage of physically correct modeling of the motion process. Eventually, the pole was balanced even on a nonplanar surface (left) and with the camera banked by up to 15 degrees (right). Poles of length 0.5 m ≤ L ≤ 2 m have been balanced.
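The length range matters because shorter poles fall faster. As a rough estimate, assuming a thin uniform rod on a frictionless joint and no control, linearizing about the upright position gives theta'' = (3g/(2L)) theta, so a small tilt grows like exp(lambda t) with lambda = sqrt(3g/(2L)). This yields a time constant of about 0.18 s for L = 0.5 m and about 0.37 s for L = 2 m, so the shortest pole leaves the vision loop half the reaction time of the longest one. The function name is hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def unstable_pole(rod_length):
    """Unstable eigenvalue (1/s) of an uncontrolled upright uniform rod.

    From the linearized equation theta'' = 3g/(2L) * theta (thin uniform
    rod, frictionless joint, cart at rest); a small tilt grows like
    exp(lam * t).
    """
    return math.sqrt(3.0 * G / (2.0 * rod_length))


for length in (0.5, 1.0, 2.0):
    lam = unstable_pole(length)
    print(f"L = {length:.1f} m: lambda = {lam:.2f} 1/s, "
          f"time constant = {1.0 / lam:.3f} s")
```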

In 1983/84, H.-J. Wuensche [Wuensche 1987] compared deterministic observer techniques with stochastic Kalman filter techniques in this vision loop. As a result, the decision was taken to switch to recursive estimation with realistic dynamic spatio-temporal models, including their uncertainties, for vision in general. The improved system was able to balance a rod even when disturbed by a similar-looking second rod moved by hand (see the sketch at left).
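The stochastic alternative can be illustrated on a cart model with a linear Kalman filter, which computes its feedback gains from assumed process and measurement noise variances instead of fixing them by hand. This is a minimal sketch under assumptions made here, not the filter used in the original work: the two-state cart model (position and speed, integrator plus first-order lag with input gain 1, T = 0.5 s, dt = 0.02 s), the noise variances, and the function name are all illustrative.

```python
def kalman_step(x, P, u, y, dt, q=(1e-4, 1e-3), r=1e-2, k_in=1.0, T=0.5):
    """One predict/update cycle of a linear Kalman filter for an assumed cart model.

    x = [position, speed], P = 2x2 covariance, u = input, y = measured
    position. q holds diagonal process-noise variances, r the measurement-noise
    variance; k_in and T are the input gain and lag time constant. All of
    these values are illustrative assumptions.
    """
    A = [[1.0, dt], [0.0, 1.0 - dt / T]]
    B = [0.0, dt * k_in / T]
    # Predict: x- = A x + B u,  P- = A P A^T + Q
    xp = [A[i][0] * x[0] + A[i][1] * x[1] + B[i] * u for i in range(2)]
    AP = [[sum(A[i][k] * P[k][j] for k in range(2)) for j in range(2)]
          for i in range(2)]
    Pp = [[sum(AP[i][k] * A[j][k] for k in range(2)) for j in range(2)]
          for i in range(2)]
    Pp[0][0] += q[0]
    Pp[1][1] += q[1]
    # Update with the position measurement, C = [1, 0]
    S = Pp[0][0] + r                       # innovation variance
    gain = [Pp[0][0] / S, Pp[1][0] / S]    # Kalman gain P- C^T / S
    innovation = y - xp[0]
    x_new = [xp[i] + gain[i] * innovation for i in range(2)]
    P_new = [[Pp[i][j] - gain[i] * Pp[0][j] for j in range(2)]
             for i in range(2)]            # (I - K C) P-
    return x_new, P_new, gain


if __name__ == "__main__":
    dt, u = 0.02, 0.2
    p, v = 0.0, 0.5                         # true state (noise-free here)
    x, P = [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]
    for step in range(2000):
        p, v = p + dt * v, v + dt * (u - v) / 0.5   # same model as the filter
        x, P, gain = kalman_step(x, P, u, p, dt)
        if step in (0, 1999):
            print(f"step {step}: gain = [{gain[0]:.4f}, {gain[1]:.4f}]")
    print(f"speed: true {v:.4f}, estimated {x[1]:.4f}")
```

With a time-invariant model and constant noise levels, the gain settles to a constant, so the steady-state Kalman filter has the same structure as a fixed-gain observer. What differs is that the gains follow from the modeled uncertainties, and during transients the filter adapts them through the covariance P.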

Video – Pole Balancing 1984

References

Meissner HG (1982). Steuerung dynamischer Systeme aufgrund bildhafter Informationen [Control of dynamic systems based on pictorial information]. Dissertation, LRT, UniBwM.

Haas G (1982). Messwertgewinnung durch Echtzeitauswertung von Bildfolgen [Measurement data acquisition by real-time evaluation of image sequences]. Dissertation, LRT, UniBwM.

Meissner HG, Dickmanns ED (1983). Control of an Unstable Plant by Computer Vision. In: T.S. Huang (ed.), Image Sequence Processing and Dynamic Scene Analysis. Springer-Verlag, Berlin, pp. 532-548.

Wünsche HJ (1987). Bewegungssteuerung durch Rechnersehen [Motion control by computer vision]. Dissertation, LRT, UniBwM.